Pierre Renard

Cloud technologies addict. Pierre enjoys making awesome cloud-based solutions.

Mounting an S3 Bucket on Windows and Linux

Wouldn’t it be perfect to use Amazon S3 like any local folder on your machine? That is what this blog post is about: we will see how to mount Amazon S3 buckets on both Linux and Windows.

Amazon Simple Storage Service (Amazon S3) is an object storage service that is secure, efficient and highly available. Customers can use it to store and protect an unlimited number of files for multiple use cases, such as static website resources, mobile applications, backup and restore, archiving, enterprise applications and big data analytics. Amazon S3 provides easy-to-use management features so we can organize our data and configure access control.

Table of Contents

- Mounting an S3 bucket on Windows Server 2016

- Mounting an S3 bucket on Linux Ubuntu 18.04

- Conclusion

Mounting an S3 bucket on Windows Server 2016

Mounting an S3 bucket on Windows is not straightforward. Several commercial products can help us mount an S3 bucket. In this blog post, we will use rclone, an open source command-line tool to manage files on cloud storage. rclone can mount any local, cloud or virtual filesystem as a disk on Windows and Linux.

Launch a Windows Server 2016 instance in the cloud

First, we need to launch a Windows Server 2016 EC2 instance with internet access and associate it with an instance profile that grants the instance access to our S3 bucket. The following IAM policy gives the minimum rights needed to access the bucket we want to mount (for this example, we will use a bucket named tmp-prenard).

    {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "s3:ListBucket",
                    "s3:DeleteObject",
                    "s3:GetObject",
                    "s3:PutObject",
                    "s3:PutObjectAcl"
                ],
                "Resource": [
                    "arn:aws:s3:::tmp-prenard/*",
                    "arn:aws:s3:::tmp-prenard"
                ]
            },
            {
                "Effect": "Allow",
                "Action": "s3:ListAllMyBuckets",
                "Resource": "arn:aws:s3:::*"
            }
        ]
    }
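
Since the policy only differs by the bucket name, it can be generated rather than edited by hand. The following is a minimal Python sketch, not part of the original setup: the function name build_bucket_policy is hypothetical, and the statements are copied from the policy above.

```python
import json


def build_bucket_policy(bucket_name: str) -> dict:
    """Return the IAM policy shown above for an arbitrary bucket name.

    build_bucket_policy is a hypothetical helper used for illustration.
    """
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Object-level and bucket-level rights on the mounted bucket
                "Effect": "Allow",
                "Action": [
                    "s3:ListBucket",
                    "s3:DeleteObject",
                    "s3:GetObject",
                    "s3:PutObject",
                    "s3:PutObjectAcl",
                ],
                "Resource": [f"{bucket_arn}/*", bucket_arn],
            },
            {
                # Lets the client list the buckets of the account
                "Effect": "Allow",
                "Action": "s3:ListAllMyBuckets",
                "Resource": "arn:aws:s3:::*",
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_bucket_policy("tmp-prenard"), indent=4))
```

The printed JSON can then be pasted into the IAM console or stored next to the rest of the infrastructure code.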

To mount an S3 bucket on an on-premises Windows Server, we have to use AWS credentials instead of an instance profile (see Understanding and getting your AWS credentials for more information).

Mount the S3 bucket on the Windows instance

On the Windows instance.

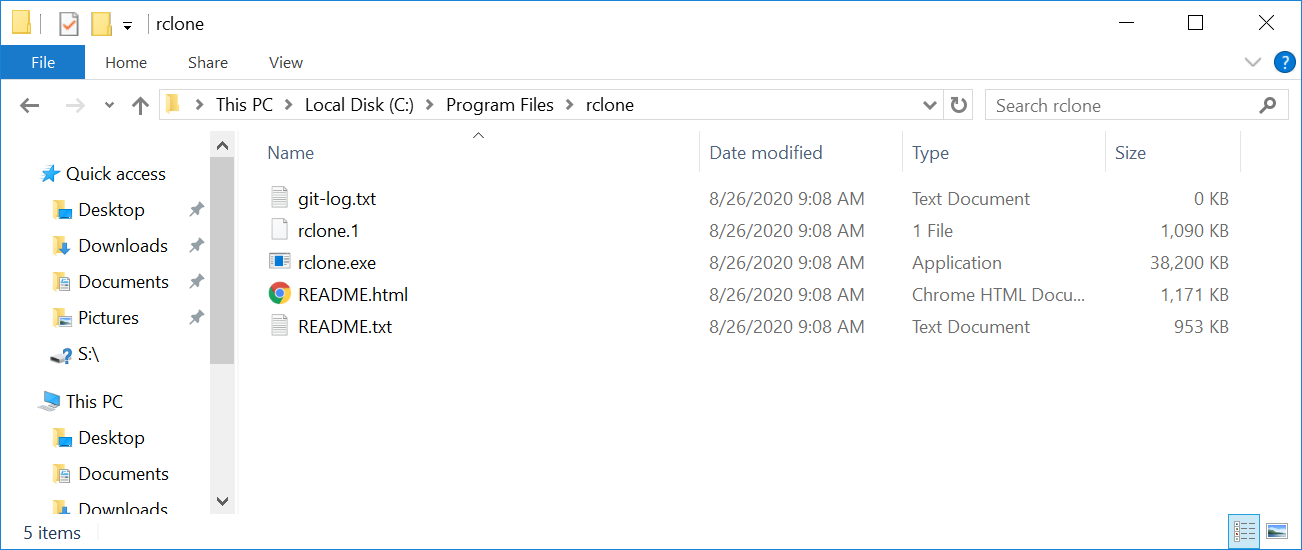

- download the latest version of rclone and unzip the archive.

-

create a directory under

c:\Program Files\rcloneand copy the content of the archive in this directory.

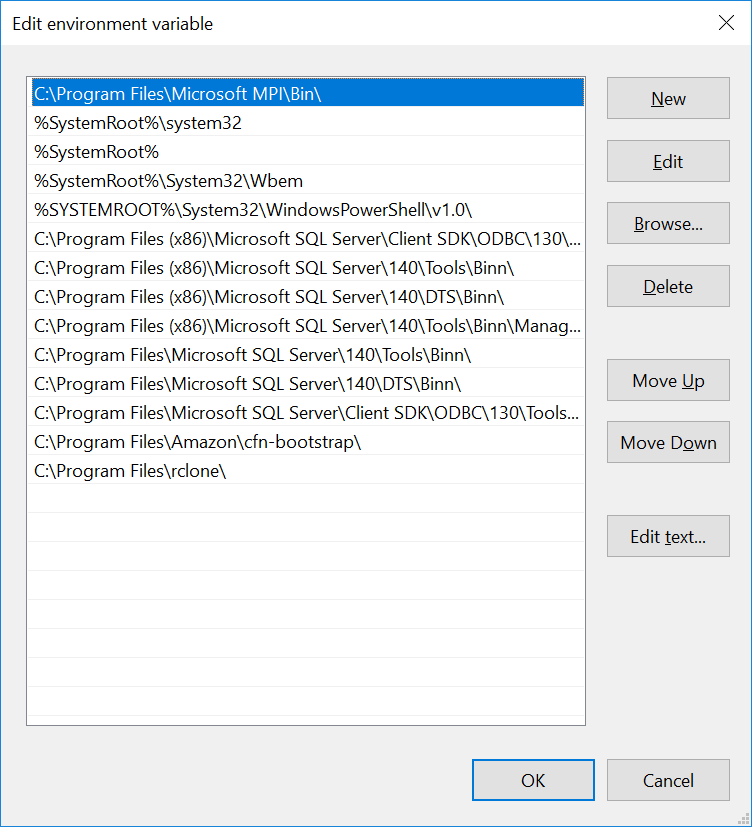

-

add the path of

rclone(C:\Program Files\rclone\) in the path Windows environment variables.

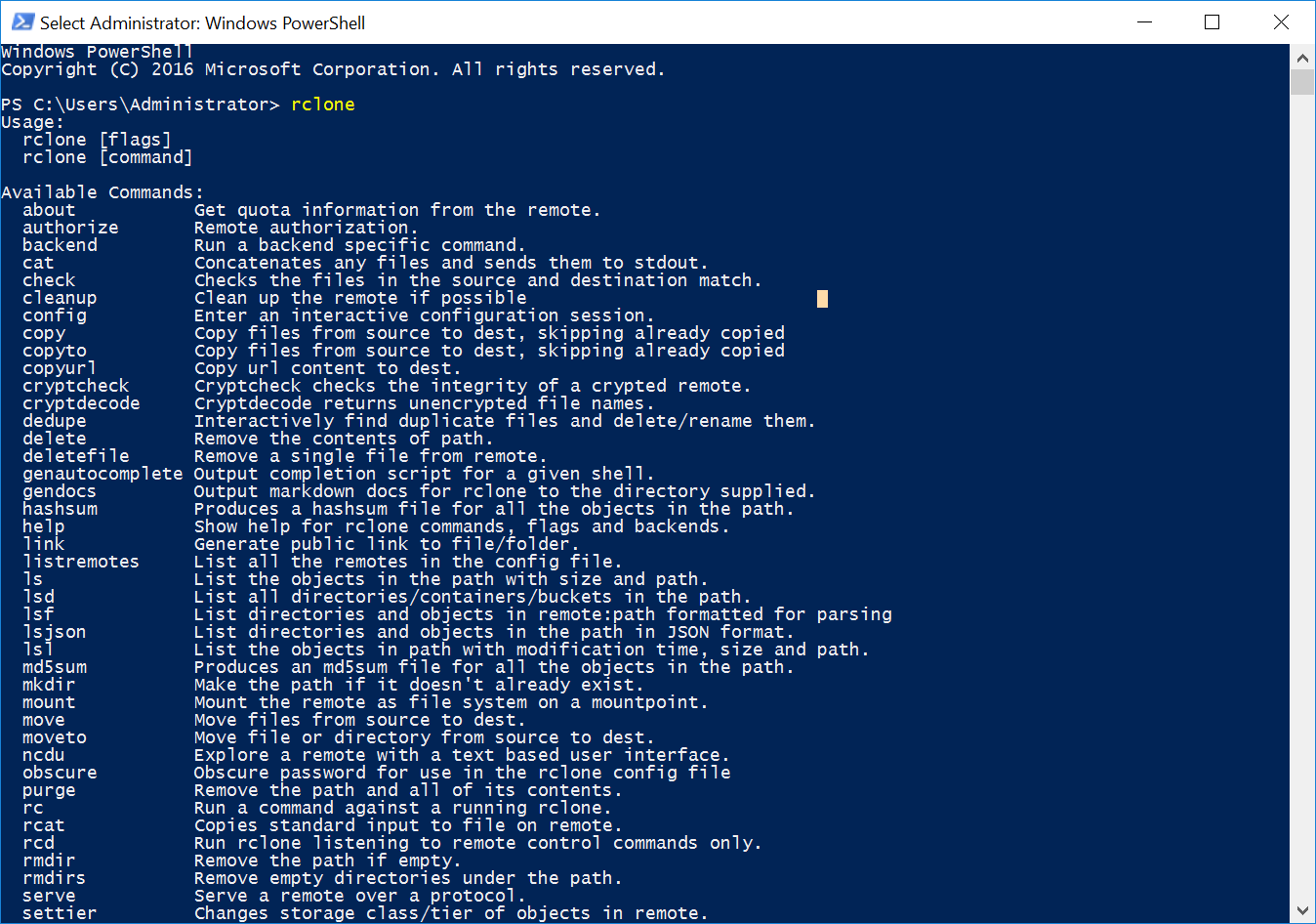

In a PowerShell console, typing rclone now lists all the available commands.

Now that we have installed rclone, we will continue with the configuration by typing rclone config:

- select n for a new remote configuration.

    Windows PowerShell
    Copyright (C) 2016 Microsoft Corporation. All rights reserved.

    PS C:\Users\Administrator> rclone config
    2020/08/26 09:22:37 NOTICE: Config file "C:\\Users\\Administrator\\.config\\rclone\\rclone.conf" not found - using defaults
    No remotes found - make a new one
    n) New remote
    s) Set configuration password
    q) Quit config
    n/s/q> n

- give a name to the connection (e.g. aws_s3).

    name> aws_s3

- select 4 for “Amazon S3 Compliant Storage Provider”.

    Type of storage to configure.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / 1Fichier
       \ "fichier"
     2 / Alias for an existing remote
       \ "alias"
     3 / Amazon Drive
       \ "amazon cloud drive"
     4 / Amazon S3 Compliant Storage Provider (AWS, Alibaba, Ceph, Digital Ocean, Dreamhost, IBM COS, Minio, etc)
       \ "s3"
     5 / Backblaze B2
       \ "b2"
     6 / Box
       \ "box"
    [...]
    Storage> 4
    ** See help for s3 backend at: https://rclone.org/s3/ **

- select 1 for the S3 provider.

    Choose your S3 provider.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / Amazon Web Services (AWS) S3
       \ "AWS"
     2 / Alibaba Cloud Object Storage System (OSS) formerly Aliyun
       \ "Alibaba"
    [...]
    provider> 1

- select 2 to use AWS credentials from the instance profile we defined earlier.

    Get AWS credentials from runtime (environment variables or EC2/ECS meta data if no env vars).
    Only applies if access_key_id and secret_access_key is blank.
    Enter a boolean value (true or false). Press Enter for the default ("false").
    Choose a number from below, or type in your own value
     1 / Enter AWS credentials in the next step
       \ "false"
     2 / Get AWS credentials from the environment (env vars or IAM)
       \ "true"
    env_auth> 2

- leave access_key_id and secret_access_key blank by pressing Enter twice.

    AWS Access Key ID.
    Leave blank for anonymous access or runtime credentials.
    Enter a string value. Press Enter for the default ("").
    access_key_id>
    AWS Secret Access Key (password)
    Leave blank for anonymous access or runtime credentials.
    Enter a string value. Press Enter for the default ("").
    secret_access_key>

- select the AWS region in which the S3 bucket has been created (eu-west-1 in this example).

    Region to connect to.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
       / The default endpoint - a good choice if you are unsure.
     1 | US Region, Northern Virginia or Pacific Northwest.
       | Leave location constraint empty.
       \ "us-east-1"
       / US East (Ohio) Region
     2 | Needs location constraint us-east-2.
       \ "us-east-2"
       / US West (Oregon) Region
     3 | Needs location constraint us-west-2.
       \ "us-west-2"
       / US West (Northern California) Region
     4 | Needs location constraint us-west-1.
       \ "us-west-1"
       / Canada (Central) Region
     5 | Needs location constraint ca-central-1.
       \ "ca-central-1"
       / EU (Ireland) Region
     6 | Needs location constraint EU or eu-west-1.
       \ "eu-west-1"
       / EU (London) Region
     7 | Needs location constraint eu-west-2.
       \ "eu-west-2"
       / EU (Stockholm) Region
    [...]
    region> 6

- leave all the remaining fields blank, as we do not use any “special” configuration such as an endpoint or a specific ACL.

    Endpoint for S3 API.
    Leave blank if using AWS to use the default endpoint for the region.
    Enter a string value. Press Enter for the default ("").
    endpoint>
    Location constraint - must be set to match the Region.
    Used when creating buckets only.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / Empty for US Region, Northern Virginia or Pacific Northwest.
       \ ""
     2 / US East (Ohio) Region.
       \ "us-east-2"
     3 / US West (Oregon) Region.
       \ "us-west-2"
     4 / US West (Northern California) Region.
       \ "us-west-1"
     5 / Canada (Central) Region.
       \ "ca-central-1"
     6 / EU (Ireland) Region.
       \ "eu-west-1"
     7 / EU (London) Region.
       \ "eu-west-2"
     8 / EU (Stockholm) Region.
       \ "eu-north-1"
     9 / EU Region.
       \ "EU"
    10 / Asia Pacific (Singapore) Region.
       \ "ap-southeast-1"
    11 / Asia Pacific (Sydney) Region.
       \ "ap-southeast-2"
    12 / Asia Pacific (Tokyo) Region.
       \ "ap-northeast-1"
    13 / Asia Pacific (Seoul)
       \ "ap-northeast-2"
    14 / Asia Pacific (Mumbai)
       \ "ap-south-1"
    15 / Asia Pacific (Hong Kong)
       \ "ap-east-1"
    16 / South America (Sao Paulo) Region.
       \ "sa-east-1"
    location_constraint>
    Canned ACL used when creating buckets and storing or copying objects.
    This ACL is used for creating objects and if bucket_acl isn't set, for creating buckets too.
    For more info visit https://docs.aws.amazon.com/AmazonS3/latest/dev/acl-overview.html#canned-acl
    Note that this ACL is applied when server side copying objects as S3
    doesn't copy the ACL from the source but rather writes a fresh one.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / Owner gets FULL_CONTROL. No one else has access rights (default).
       \ "private"
     2 / Owner gets FULL_CONTROL. The AllUsers group gets READ access.
       \ "public-read"
       / Owner gets FULL_CONTROL. The AllUsers group gets READ and WRITE access.
     3 | Granting this on a bucket is generally not recommended.
       \ "public-read-write"
     4 / Owner gets FULL_CONTROL. The AuthenticatedUsers group gets READ access.
       \ "authenticated-read"
       / Object owner gets FULL_CONTROL. Bucket owner gets READ access.
     5 | If you specify this canned ACL when creating a bucket, Amazon S3 ignores it.
       \ "bucket-owner-read"
       / Both the object owner and the bucket owner get FULL_CONTROL over the object.
     6 | If you specify this canned ACL when creating a bucket, Amazon S3 ignores it.
       \ "bucket-owner-full-control"
    acl>
    The server-side encryption algorithm used when storing this object in S3.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / None
       \ ""
     2 / AES256
       \ "AES256"
     3 / aws:kms
       \ "aws:kms"
    server_side_encryption>
    If using KMS ID you must provide the ARN of Key.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / None
       \ ""
     2 / arn:aws:kms:*
       \ "arn:aws:kms:us-east-1:*"
    sse_kms_key_id>
    The storage class to use when storing new objects in S3.
    Enter a string value. Press Enter for the default ("").
    Choose a number from below, or type in your own value
     1 / Default
       \ ""
     2 / Standard storage class
       \ "STANDARD"
     3 / Reduced redundancy storage class
       \ "REDUCED_REDUNDANCY"
     4 / Standard Infrequent Access storage class
       \ "STANDARD_IA"
     5 / One Zone Infrequent Access storage class
       \ "ONEZONE_IA"
     6 / Glacier storage class
       \ "GLACIER"
     7 / Glacier Deep Archive storage class
       \ "DEEP_ARCHIVE"
     8 / Intelligent-Tiering storage class
       \ "INTELLIGENT_TIERING"
    storage_class>

- finally, skip the advanced configuration by typing n. We get a summary of the configuration. If everything is fine, we can type y. Otherwise, we can type e to edit the remote, or d to delete it and start again.

    Edit advanced config? (y/n)
    y) Yes
    n) No (default)
    y/n> n
    Remote config
    --------------------
    [aws_s3]
    type = s3
    provider = AWS
    env_auth = true
    region = eu-west-1
    --------------------
    y) Yes this is OK (default)
    e) Edit this remote
    d) Delete this remote
    y/e/d> y
    Current remotes:

    Name                 Type
    ====                 ====
    aws_s3               s3

    e) Edit existing remote
    n) New remote
    d) Delete remote
    r) Rename remote
    c) Copy remote
    s) Set configuration password
    q) Quit config
    e/n/d/r/c/s/q> q
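
The summary printed by rclone config is an INI section, so the same remote could in principle be written without the interactive wizard. Here is a minimal Python sketch, assuming the four keys shown in the summary are all that is needed. The helper name render_rclone_remote is hypothetical, and the location of the configuration file depends on the rclone version (rclone config file prints the path in use).

```python
import configparser
import io


def render_rclone_remote(name: str, region: str) -> str:
    """Render an rclone remote section equivalent to the summary above.

    render_rclone_remote is a hypothetical helper; the keys and values are
    the ones displayed by the interactive configuration.
    """
    config = configparser.ConfigParser()
    config[name] = {
        "type": "s3",
        "provider": "AWS",
        "env_auth": "true",  # take credentials from the instance profile
        "region": region,
    }
    buffer = io.StringIO()
    config.write(buffer)
    return buffer.getvalue()


if __name__ == "__main__":
    # Print the section so it can be appended to rclone.conf
    print(render_rclone_remote("aws_s3", "eu-west-1"))
```

Generating the section this way is mostly useful when the same remote has to be declared on several instances.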

rclone setup is now complete. Unfortunately, we are not done yet. If we try to mount the bucket with rclone mount <configuration_name>:<bucket_name> <drive_letter>:, we get an error.

    PS C:\Users\Administrator> rclone mount aws_s3:tmp-prenard S: --vfs-cache-mode full
    panic: cgofuse: cannot find winfsp

    goroutine 10 [running]:
    github.com/rclone/rclone/vendor/github.com/billziss-gh/cgofuse/fuse.(*FileSystemHost).Mount(0xc0002123c0, 0xc00003a0c0, 0x2, 0xc000450000, 0x11, 0x14, 0x0)
            github.com/rclone/rclone/vendor/github.com/billziss-gh/cgofuse/fuse/host.go:573 +0x66d
    github.com/rclone/rclone/cmd/cmount.mount.func1(0xc0002123c0, 0xc00003a0c0, 0x2, 0xc000450000, 0x11, 0x14, 0x1d5f860, 0xc0001bc380, 0xc000188300)
            github.com/rclone/rclone/cmd/cmount/mount.go:168 +0x74
    created by github.com/rclone/rclone/cmd/cmount.mount
            github.com/rclone/rclone/cmd/cmount/mount.go:166 +0x3da
    PS C:\Users\Administrator>

In order to make rclone work we need winfsp, an open source Windows File System Proxy which makes it easy to write user space filesystems for Windows. It provides a FUSE emulation layer which rclone uses in combination with cgofuse.

To get rid of this error, we need to download and install winfsp-1.7.20172.msi.

After that we can try again to mount the bucket on the instance.

    Windows PowerShell
    Copyright (C) 2016 Microsoft Corporation. All rights reserved.

    PS C:\Users\Administrator> rclone mount aws_s3:tmp-prenard S: --vfs-cache-mode full
    The service rclone has been started.

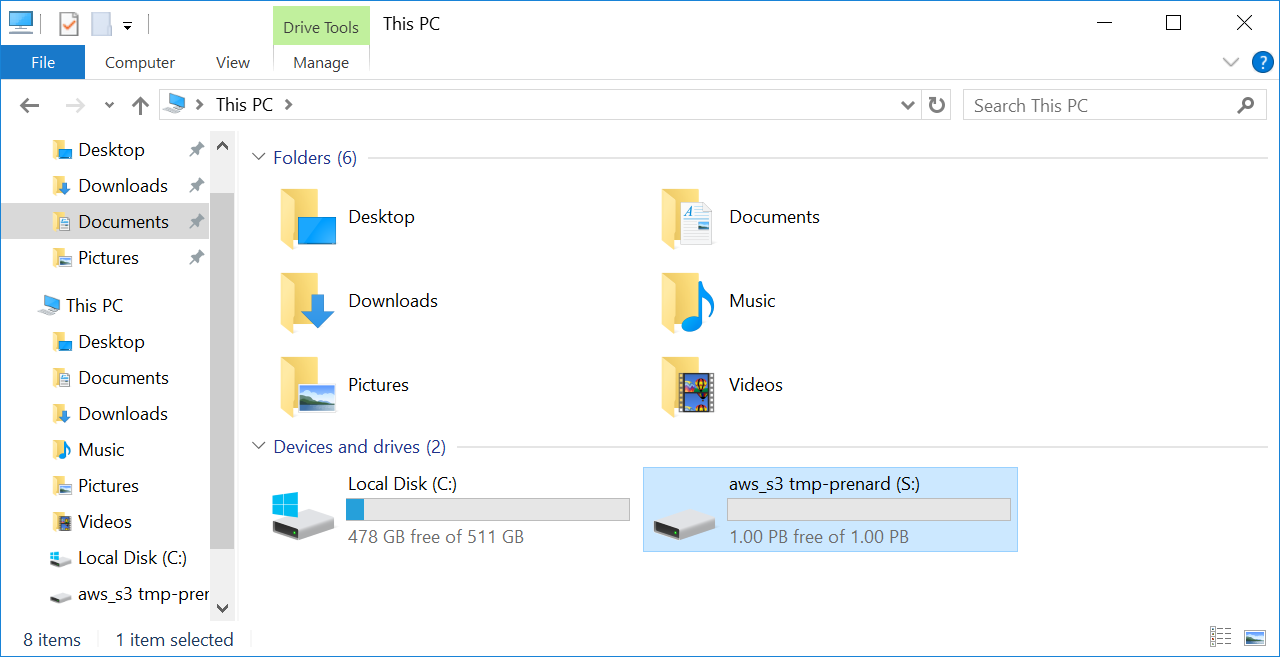

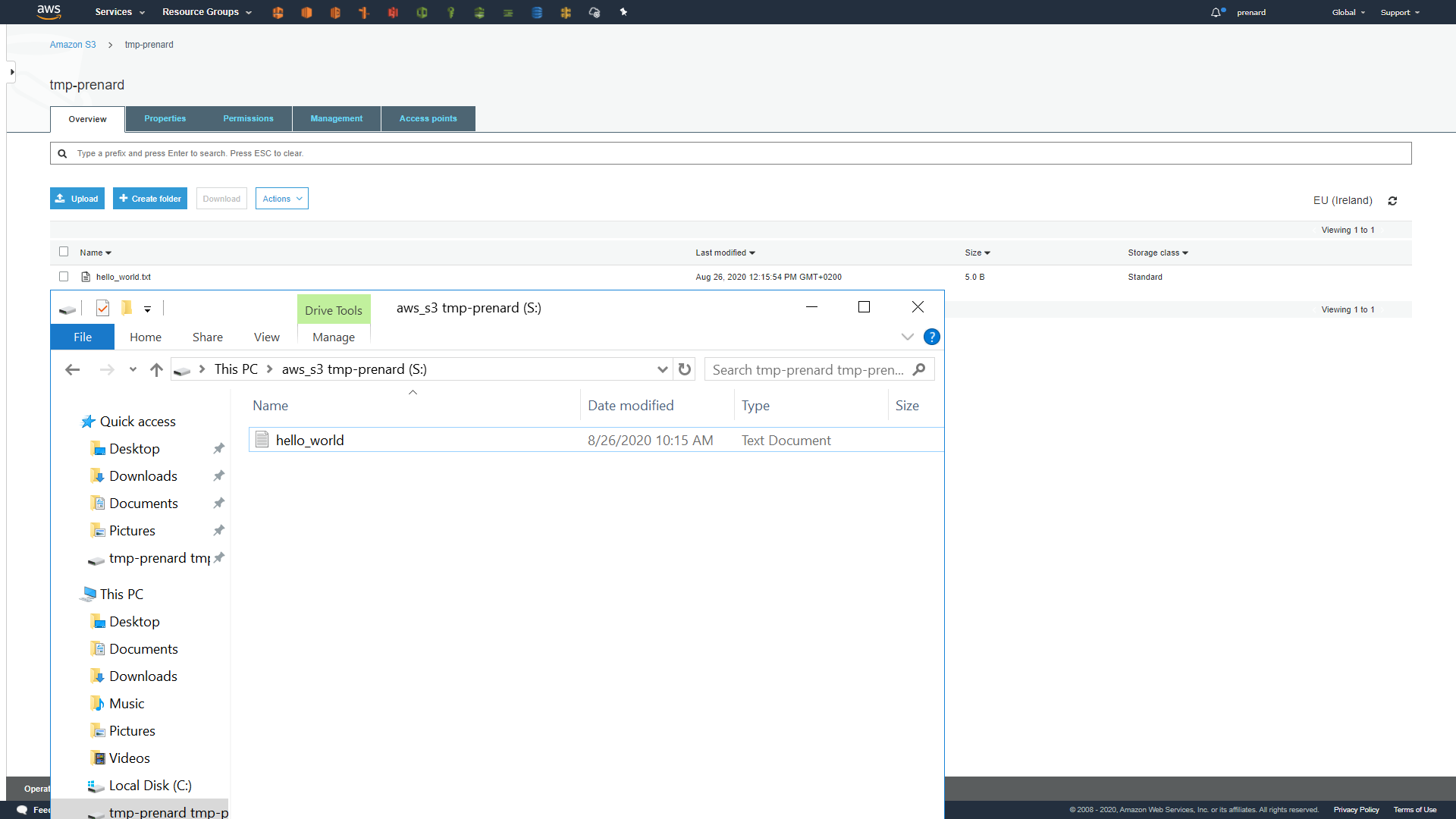

We can now see a new disk mounted on the instance. Let’s drop a file at the root of the disk to see if everything is working.

Wonderful, it’s working! We can see that the synchronization is working between the instance and the S3 bucket.

It is worth mentioning that it is also possible to specify a Bucket prefix after the Bucket name in the rclone mount command to mount only what is under this prefix.
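
To make the prefix syntax concrete, here is a minimal Python sketch that assembles the rclone mount arguments with or without a prefix. The helper name build_mount_command and the prefix backups/2020 are hypothetical; the remote name, bucket, drive letter and --vfs-cache-mode flag are the ones used earlier in this post.

```python
from typing import List


def build_mount_command(remote: str, bucket: str, drive: str, prefix: str = "") -> List[str]:
    """Build the argument list for rclone mount, optionally under a prefix.

    build_mount_command is a hypothetical helper used for illustration.
    """
    source = f"{remote}:{bucket}"
    cleaned = prefix.strip("/")
    if cleaned:
        # The prefix simply follows the bucket name, separated by a slash
        source = f"{source}/{cleaned}"
    return ["rclone", "mount", source, drive, "--vfs-cache-mode", "full"]


if __name__ == "__main__":
    # Whole bucket, then only the objects under a hypothetical prefix
    print(" ".join(build_mount_command("aws_s3", "tmp-prenard", "S:")))
    print(" ".join(build_mount_command("aws_s3", "tmp-prenard", "S:", "backups/2020")))
```

The returned list can be passed as is to subprocess.run if the mount has to be started from a script.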

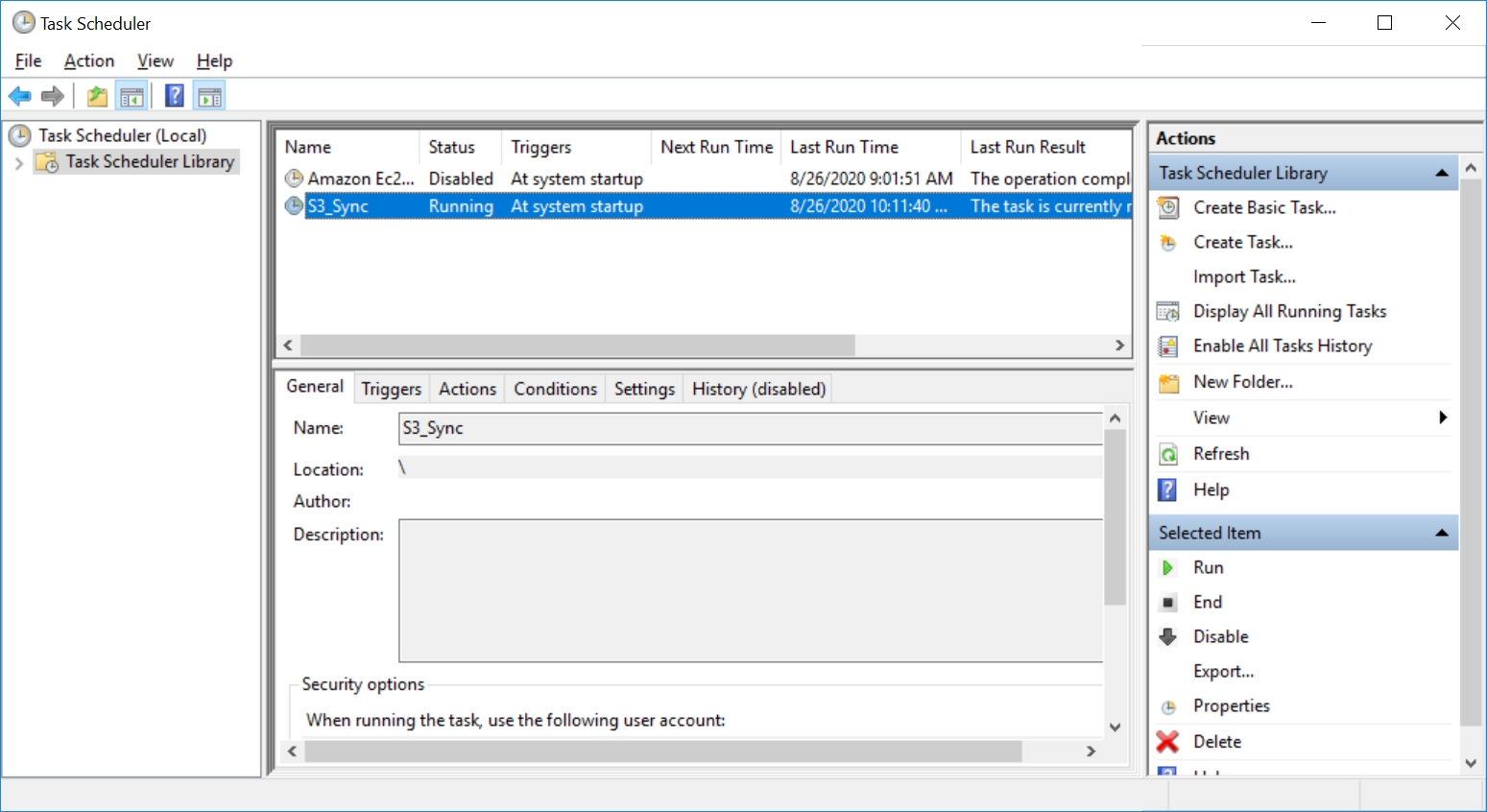

Automate everything!

Unfortunately, nothing is automated yet, and we would have to run the same command every time the instance starts. Here are some PowerShell commands that create a Windows scheduled task which runs at startup.

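    # Trigger the task when the machine starts
    $time = New-ScheduledTaskTrigger -AtStartup
    # Run the mount command from a hidden PowerShell window
    $action = New-ScheduledTaskAction -Execute PowerShell.exe -Argument '-WindowStyle Hidden -Command "rclone mount aws_s3:tmp-prenard s: --vfs-cache-mode full --allow-other"'
    # Remove the execution time limit so that the mount keeps running
    $setting = New-ScheduledTaskSettingsSet -ExecutionTimeLimit 0
    # Register the task with the highest privileges, then start it right away
    Register-ScheduledTask -TaskName "S3_Sync" -Action $action -RunLevel Highest -Trigger $time -Settings $setting
    Start-ScheduledTask -TaskName "S3_Sync"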

Mounting an S3 bucket on Linux Ubuntu 18.04

Mounting an S3 Bucket on Linux is a lot easier. We will use s3fs, which allows Linux and macOS to mount an S3 bucket via FUSE. s3fs preserves the native object format for files, allowing use of other tools like AWS CLI.

For this example, we will use an Ubuntu 18.04 AMI and launch an instance with the same instance profile we used for Windows.

Here is the policy needed to access our S3 Bucket from the instance.

    {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "s3:ListBucket",
                    "s3:DeleteObject",
                    "s3:GetObject",
                    "s3:PutObject",
                    "s3:PutObjectAcl"
                ],
                "Resource": [
                    "arn:aws:s3:::BUCKET_NAME/*",
                    "arn:aws:s3:::BUCKET_NAME"
                ]
            },
            {
                "Effect": "Allow",
                "Action": "s3:ListAllMyBuckets",
                "Resource": "arn:aws:s3:::*"
            }
        ]
    }

Once the instance is up and running, we can simply:

- install s3fs with apt
- create a directory /mnt/s3 as mount point
- mount the S3 bucket on the previously created directory

    apt-get install -y s3fs
    mkdir /mnt/s3
    s3fs tmp-prenard /mnt/s3 -o iam_role
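
The three commands above can also be driven from a script, for example when the bucket name or mount point changes between instances. This is a minimal Python sketch under stated assumptions: the helper names linux_mount_steps and run_steps are hypothetical, the commands must run as root, and mkdir -p is used instead of mkdir so that the script can be run again safely.

```python
import subprocess
from typing import List


def linux_mount_steps(bucket: str, mount_point: str) -> List[List[str]]:
    """Return the commands from this post as argument lists.

    linux_mount_steps is a hypothetical helper used for illustration.
    """
    return [
        ["apt-get", "install", "-y", "s3fs"],
        # -p is an addition: it avoids an error if the directory already exists
        ["mkdir", "-p", mount_point],
        ["s3fs", bucket, mount_point, "-o", "iam_role"],
    ]


def run_steps(steps: List[List[str]]) -> None:
    """Run each command in order and stop at the first failure."""
    for step in steps:
        subprocess.run(step, check=True)


if __name__ == "__main__":
    # Only print the commands here; call run_steps(...) as root to execute them
    for step in linux_mount_steps("tmp-prenard", "/mnt/s3"):
        print(" ".join(step))
```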

Running ls on the mount point now shows the file created previously:

    $ ls /mnt/s3/
    hello_world.txt
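
The same check can be scripted, which helps when the mount has to be verified before a backup job writes to it. Below is a minimal Python sketch. The helper name inspect_mount is hypothetical, and it relies only on the standard library.

```python
import os
from typing import List, Tuple


def inspect_mount(mount_point: str) -> Tuple[bool, List[str]]:
    """Report whether the path is a mount point and list its entries.

    inspect_mount is a hypothetical helper used for illustration.
    """
    is_mounted = os.path.ismount(mount_point)
    entries = sorted(os.listdir(mount_point))
    return is_mounted, entries


if __name__ == "__main__":
    path = "/mnt/s3"
    if os.path.isdir(path):
        mounted, files = inspect_mount(path)
        print(f"{path} mounted: {mounted}")
        for name in files:
            print(name)
    else:
        print(f"{path} does not exist")
```

If the first value is False, the directory exists but the bucket is not mounted on it, so anything written there would stay on the local disk.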

Conclusion

Amazon S3 is already a great service that provides flexibility, durability, availability and ease of use. In this blog post, we demonstrated that we can use an S3 bucket as a filesystem on both Windows and Linux, which can help us centralize data or prepare a backup plan by saving data in the mounted S3 bucket at a lower cost than with Amazon EFS or Amazon FSx.